Fake accounts have been around for years. They are commonly used to anonymously request info, avoid spam from an online merchant or participate anonymously in a conversation. Initially, fake accounts were easy to create manually, but over time the process became automated. More recently, fake accounts (aka bot or spam accounts) used to deliver a message or publish information automatically have become a sticking point in the $44 billion acquisition of Twitter by Elon Musk.

How Common are Fake Accounts?

Most of our digital interactions require an account, whether it is a one-time purchase from a retailer, your favorite social media platform, or access to technical product information. Today, with so much of our lives managed behind rich, account-based application platforms, fake account abuse is commonplace. For example, to establish a valid Gmail account, Google has implemented a series of requirements, many of which can be bypassed through known Gmail farming techniques. Every social media and dating platform has a fake account challenge. Between October and December 2022, Facebook blocked 1.3 billion accounts, and the company believes that the number of fake profiles has dropped by 23% year over year. At the same time, estimates show that roughly 5% of Facebook profiles are fake, a whopping 142 million users.

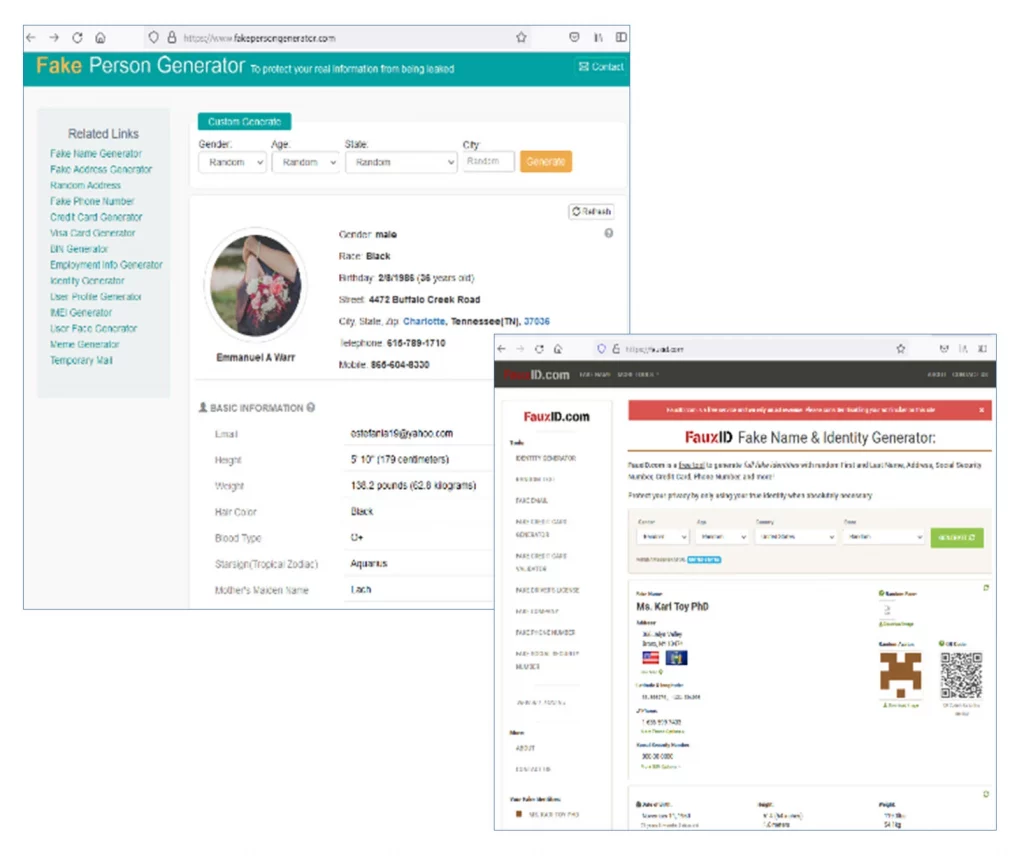

Fake accounts are so commonly used that there are software tools that automate their creation to “mask your identity or generate status”. In a real-life use case, a 19-year-old college student used software to fabricate fake accounts as a means of generating 7.7 million [fake] comments on net neutrality. A web search quickly uncovered several offerings, each of which gives the visitor a fake identity that can then be used to create fake accounts.

One of the most significant challenges in separating real from fake accounts is that establishing a new account appears to be a legitimate transaction, and new accounts are the lifeblood of account-based application platforms where the users (you and me) are the product. For social media companies like Facebook and Twitter, valuation is tied to the number of users they have, so the battle against fake accounts has a direct impact on their financial results.

The Global Impact of Fake Accounts

Whether the $44 billion price tag Elon Musk has placed on fake accounts is real or not remains to be seen, but the fact of the matter is that fake accounts have wide-ranging impacts on our day-to-day lives.

- Fraudulent Covid Relief: The US Secret Service announced the return of roughly $2 billion in fraudulent Covid relief funds, generated using fake accounts.

- Disinformation: It’s documented that Facebook has been used to influence political outcomes and they are continually removing fake accounts to stem the flow of fake news.

- Fake Reviews: Studies show that as many as 89% of us trust online reviews as much as personal recommendations, which, in turn, can result in a significant increase (or decrease) in revenue for the vendor. Using fake accounts to enhance product reputation on Amazon or Yelp has become so commonplace that it is now its own economy, with merchants who will create reviews for you and organizations who will analyze the reviews for you. Unfortunately, fake reviews for mediocre products are so widespread that the World Economic Forum estimates that they drove roughly $152 billion in global spending on lackluster products and services in 2020.

- Financial Fraud: In the financial services market, fake account creation using synthetic identities results in average financial losses of between $81,000 and $97,000 per successful incident.

The Impact on Enterprise Business

In a 2021 Forrester report on the business impact of bots, 26% of the 425 respondents indicated that they had lost as much as 10% of their revenue due to account abuse, which includes fake account creation and account takeover. A key takeaway from the report is that bots are not a security or ecommerce only problem – they are a business problem, impacting a number of different groups within the organization.

- Infrastructure: Each user account incurs infrastructure costs, and when fake accounts are used in large-scale shopping bot attacks, those costs can skyrocket. Worse yet, the web site and mobile app can become non-responsive, resulting in (real) user dissatisfaction.

- Security and Fraud: Security teams are burdened by efforts to slow or stop the use of fake accounts and often struggle to separate real from fake. Fraud teams are often engaged directly, investigating individual accounts and issuing account reset or delete recommendations, all of which consumes time that could (and should) be spent on broader team goals.

- Marketing and Sales: On platforms where the users are the product, a large population of fake accounts may generate inflated marketing statistics, which in turn can lead to poor or misguided sales and program decisions.

- Customer Satisfaction: Losing out on a purchase of a high-demand item to a bot driven by fake accounts is a frustrating user experience, leading some buyers to shop elsewhere. According to the Forrester report, for every 1-point improvement in its CX (customer experience) score, a retailer can anticipate a $523 million increase in incremental revenue.

- PR and Brand: Whether it’s Twitter, Facebook, your favorite shopping site or your financial management app, inaction by platforms to address the fake account problem can impact a company’s brand in negative ways. According to Accenture, 57% of consumers spend more on brands to which they are loyal, and loyalty program members can generate 12%-18% more incremental revenue growth per year than non-members. Research also shows that acquiring a new customer can cost 5 times more than retaining an existing one.

What Can Be Done to Stop Fake Accounts? Try Cequence

Taking action against fake account creation is a challenging game of cat and mouse between the application owner and the bad actor. Attackers target the account registration API directly, using automated CAPTCHA solvers and bypassing traditional defense mechanisms. They evolve continuously, using human farms and adjusting language, frequency of posting, and location to continue their fraudulent efforts.
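To make the cat-and-mouse dynamic concrete, here is a minimal sketch of one kind of traditional defense: a sliding-window check on how often a single source registers new accounts. It is an illustration only, not a description of how Cequence or any platform works. The class name `SignupVelocityCheck`, its parameters, and the example addresses are all hypothetical.

```python
from collections import defaultdict, deque


class SignupVelocityCheck:
    """Hypothetical example: flag a source that registers too many accounts
    within a sliding time window."""

    def __init__(self, max_signups: int = 3, window_seconds: float = 60.0):
        self.max_signups = max_signups
        self.window_seconds = window_seconds
        # Maps a source key (for example an IP address) to recent signup times.
        self._events = defaultdict(deque)

    def is_suspicious(self, source: str, timestamp: float) -> bool:
        """Record one signup attempt and report whether the source is over the limit."""
        events = self._events[source]
        # Discard timestamps that have aged out of the window.
        while events and timestamp - events[0] >= self.window_seconds:
            events.popleft()
        events.append(timestamp)
        return len(events) > self.max_signups


check = SignupVelocityCheck(max_signups=3, window_seconds=60)

# A burst of four signups in four seconds: only the fourth is flagged.
burst = [check.is_suspicious("203.0.113.7", t) for t in (0, 1, 2, 3)]

# A patient attacker spacing signups 30 seconds apart is never flagged.
paced = [check.is_suspicious("198.51.100.9", t) for t in (0, 30, 60, 90, 120)]
```

The second case shows why a simple threshold is easy to evade: an attacker who slows down or rotates sources stays under the limit, which is the kind of frequency and location adjustment described above.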

The Unified API Protection (UAP) platform protects your applications and APIs from attackers, eliminating unknown and unmitigated API security risks that lead to data loss, fraud, and business disruption. A patented machine learning analytics engine, built upon the largest database of API threat behaviors in the world, works behind the scenes to separate legitimate transactions from malicious ones, creating a fingerprint of known-good accounts that can then be used for policy-based mitigation. Learn more about our unique approach to fake account prevention with a personalized demo.